Artificial intelligence has moved beyond experimental technology into the operational core of modern businesses. The professionals who understand how to build with AI—not just use it—are creating measurable competitive advantages in every industry. Yet most discussions around AI literacy focus on surface-level tool adoption rather than the deeper technical and strategic capabilities that compound over time.

The gap between knowing AI exists and knowing how to architect AI systems is widening. Companies are no longer impressed by someone who can generate content with ChatGPT. They need people who can design retrieval systems, evaluate model performance, automate complex workflows, and optimize for AI-driven discovery. These are not futuristic concerns—they are current hiring priorities.

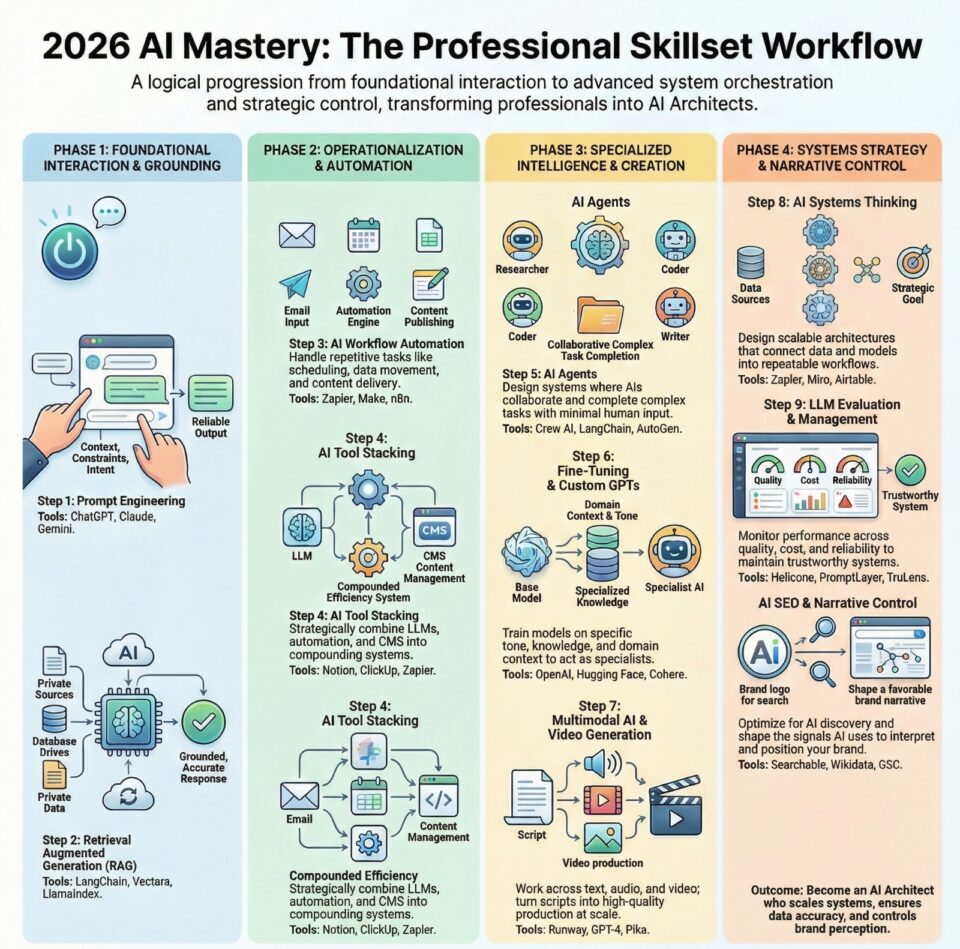

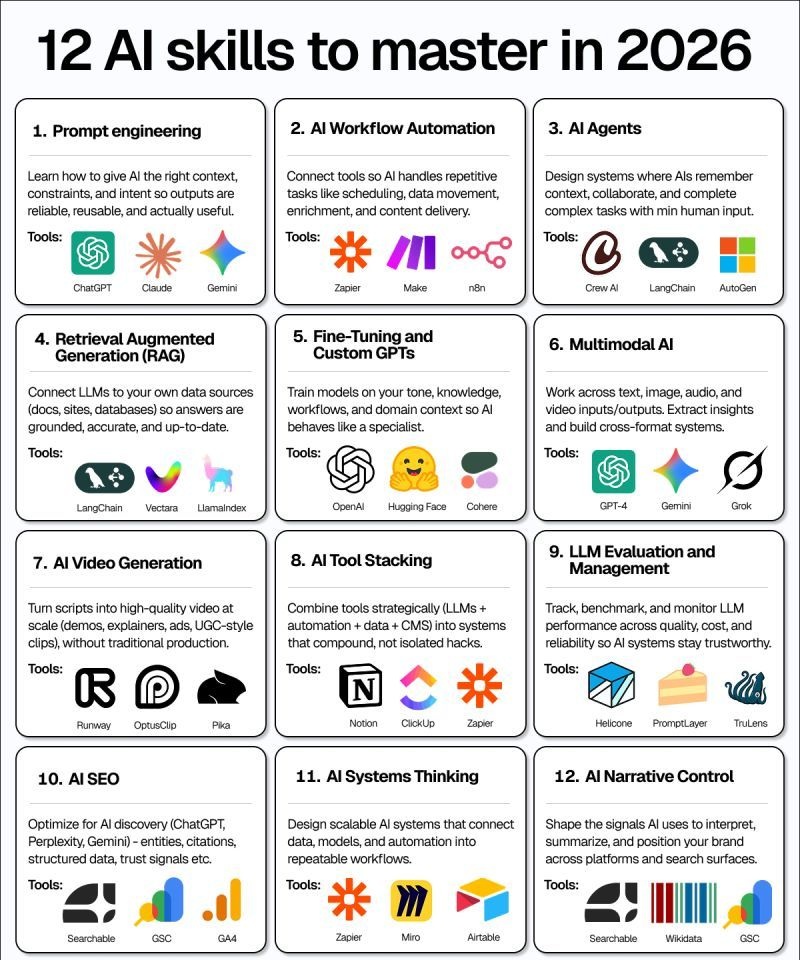

This guide covers twelve foundational AI skills that deliver long-term career leverage. Each section includes practical definitions, business applications, relevant platforms, and actionable learning paths. Whether the goal is to build AI agents without coding, learn prompt engineering as a beginner, or explore fine-tuning LLMs for niche industries, the focus is on skills that stay valuable as models evolve and tools change. Strategic AI capability, not tool familiarity, is what creates lasting professional advantage.

What Are the Most Important AI Skills to Learn?

The most valuable AI skills combine technical implementation with strategic business application. Here are the twelve essential capabilities:

- Prompt Engineering: Structuring inputs to generate reliable, contextual outputs from language models

- AI Workflow Automation: Connecting tools to handle repetitive tasks across platforms automatically

- AI Agents: Building systems that maintain context and complete multi-step tasks autonomously

- Retrieval Augmented Generation: Connecting LLMs to proprietary data sources for accurate, grounded responses

- Fine-Tuning and Custom GPTs: Training models on domain-specific knowledge and organizational context

- Multimodal AI: Working across text, image, audio, and video inputs and outputs simultaneously

- AI Video Generation: Producing high-quality video content from scripts without traditional production

- AI Tool Stacking: Combining multiple platforms into integrated systems that compound effectiveness

- LLM Evaluation and Management: Tracking performance, cost, and reliability across model deployments

- AI SEO: Optimizing content and brand signals for discovery in AI-powered search engines

- AI Systems Thinking: Designing scalable architectures that connect data, models, and automation

- AI Narrative Control: Shaping how AI systems interpret, summarize, and represent brand information

1. Prompt Engineering

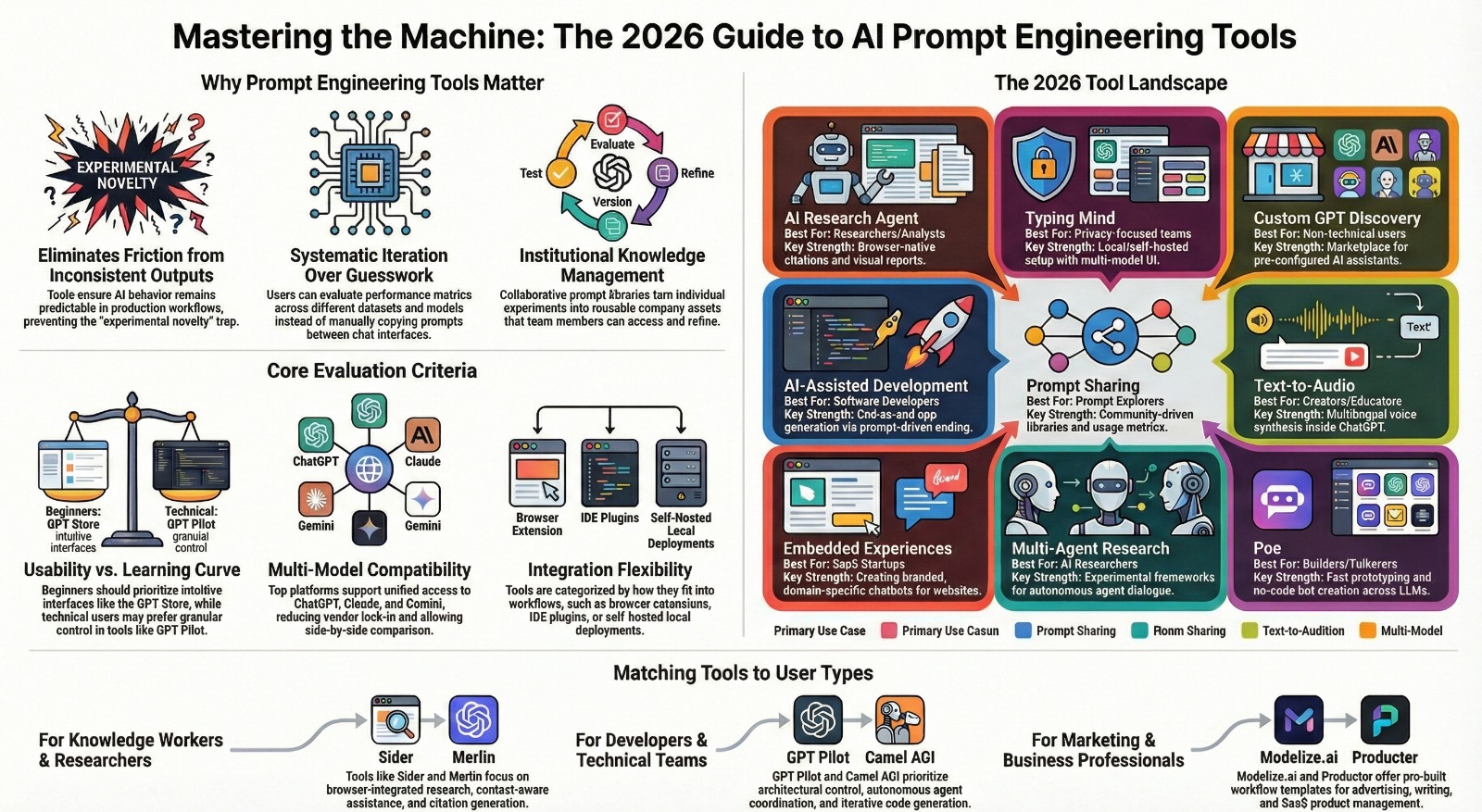

Prompt engineering is the practice of structuring instructions, context, and constraints to produce consistent, high-quality outputs from large language models. Rather than treating AI as a search engine, effective prompt engineering treats it as a collaborative system that needs clear intent, relevant examples, and explicit formatting requirements. The discipline combines technical precision with linguistic clarity—understanding both how models process language and how to communicate goals effectively.

Many beginners approach prompts as casual requests, leading to inconsistent results that require constant regeneration. Professional prompt engineering involves defining roles, providing contextual background, specifying output structure, and setting explicit constraints. This systematic approach transforms AI from an unpredictable tool into a reliable system component. For beginners learning prompt engineering, the transition from basic queries to structured prompts represents the first major skill progression.
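The four parts named above (role, context, output structure, constraints) can be kept as separate fields and assembled in a fixed order. The following is a minimal sketch, not a standard API: `PromptSpec`, `build_prompt`, and the refund policy text are all made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the reusable parts of a structured prompt."""
    role: str
    context: str
    output_format: str
    constraints: list[str] = field(default_factory=list)


def build_prompt(spec: PromptSpec, task: str) -> str:
    """Assemble the parts in a fixed order so every request has the same shape."""
    constraint_lines = "\n".join(f"- {c}" for c in spec.constraints)
    sections = [
        f"Role: {spec.role}",
        f"Context:\n{spec.context}",
        f"Task:\n{task}",
        f"Output format:\n{spec.output_format}",
        f"Constraints:\n{constraint_lines}",
    ]
    return "\n\n".join(sections)


# Illustrative values only; the policy text is invented for this example.
support_spec = PromptSpec(
    role="Customer support assistant for an online store",
    context="Refunds are available within 30 days of delivery.",
    output_format="Two short paragraphs, then a bulleted list of next steps.",
    constraints=["Cite the policy you relied on.", "Do not give legal advice."],
)

prompt = build_prompt(support_spec, "A customer asks for a refund after 10 days.")
print(prompt)
```

Keeping the spec separate from the task means the same role, context, and constraints can be reused across many requests, which is where the consistency comes from.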

Why It Matters

Organizations waste significant resources on prompt iteration because teams lack fundamental engineering discipline. A well-designed prompt system reduces token costs, improves output consistency, and enables non-technical teams to use AI effectively. Marketing teams can generate brand-consistent content. Product teams can automate documentation. Support teams can handle complex customer inquiries. Each use case requires understanding how models interpret instructions, where they hallucinate, and how to constrain outputs without limiting usefulness.

Consider a customer support scenario where generic prompts produce legally risky responses. A properly engineered system includes company policies as context, defines acceptable response boundaries, requires citation of specific help articles, and formats outputs for immediate use. The difference between amateur and professional prompt engineering shows up in production reliability, not demonstration quality.

Tools and Platforms

ChatGPT, Claude, and Gemini represent the primary platforms for developing prompt engineering skills. Each model has different instruction-following characteristics, context window limitations, and reasoning capabilities. ChatGPT excels at creative tasks and conversational depth. Claude handles longer documents and nuanced reasoning. Gemini integrates multimodal inputs effectively. Understanding these differences allows prompt engineers to match models to use cases rather than forcing every task through a single system.

Specialized platforms support prompts once they become shared assets. PromptLayer focuses on versioning, logging, and testing prompts for production systems, while PromptBase is a marketplace for buying and selling prompts. These tools matter when prompts become organizational assets rather than individual experiments.

How to Start Learning

Begin with the constraint-first method: define exactly what the output should look like before writing the prompt. Create examples of perfect responses, then work backward to identify what context and instructions produce that quality consistently. Practice with structured tasks—data transformation, content formatting, information extraction—before attempting creative work.
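One way to practice the constraint-first method is to write the output contract as code before writing any prompt, then check every model response against it. This is a sketch under assumptions: the field names, the allowed sentiment labels, and `validate_output` are hypothetical, and the responses are hand-written strings rather than real model output.

```python
import json

# Hypothetical output contract, written before the prompt itself.
REQUIRED_FIELDS = {"summary": str, "sentiment": str, "action_items": list}
ALLOWED_SENTIMENTS = {"positive", "neutral", "negative"}


def validate_output(raw: str) -> list[str]:
    """Return a list of problems; an empty list means the contract is met."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be a JSON object"]

    problems = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            problems.append(f"missing field: {name}")
        elif not isinstance(data[name], expected_type):
            problems.append(f"wrong type for {name}")

    sentiment = data.get("sentiment")
    if isinstance(sentiment, str) and sentiment not in ALLOWED_SENTIMENTS:
        problems.append(f"unexpected sentiment: {sentiment}")
    return problems


good = '{"summary": "ok", "sentiment": "neutral", "action_items": []}'
bad = '{"summary": "ok", "sentiment": "angry"}'
print(validate_output(good))
print(validate_output(bad))
```

With a check like this in place, prompt changes can be judged by how often responses pass the contract instead of by impression.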

Document every successful prompt pattern. Build a personal library of templates for common tasks: summarization, analysis, generation, transformation. Test each prompt with edge cases and unusual inputs to understand failure modes. The goal is not finding magical prompts but developing systematic approaches that work reliably across different scenarios. Advanced practitioners eventually develop mental models for how different instruction types affect outputs, enabling rapid iteration and adaptation to new model capabilities.

2. AI Workflow Automation

AI workflow automation connects multiple systems and tools so that AI handles repetitive, multi-step processes without human intervention. Unlike simple task automation, AI-powered workflows make decisions, adapt to context, and handle exceptions intelligently. These systems monitor triggers, process information across platforms, execute actions based on conditions, and route results to appropriate destinations. The combination of traditional automation logic with AI decision-making creates systems that feel adaptive rather than rigidly programmed.

Most businesses still rely on manual data movement between platforms—copying information from emails to CRMs, updating spreadsheets from forms, generating reports from multiple sources. These tasks consume hours daily while introducing human error. AI workflow automation tools for small businesses and enterprises alike eliminate this friction by creating intelligent pipelines that understand content, make routing decisions, and maintain data consistency across systems.

Why It Matters

Time compression is the immediate benefit. Workflows that take employees 30 minutes to complete manually run in seconds when automated. Sales teams spend time selling instead of data entry. Marketing teams focus on strategy instead of campaign mechanics. Operations teams handle exceptions instead of routine processing. The productivity gains are straightforward mathematics—hours returned to higher-value activities.

The strategic benefit is consistency. Automated workflows apply the same defined logic every time, without fatigue or distraction. Lead qualification happens uniformly. Content distribution follows brand guidelines. Customer communications maintain appropriate tone. When AI workflow automation tools replace manual processes in a small business, quality becomes predictable rather than variable. A small e-commerce business can process orders, update inventory, generate shipping labels, and send confirmation emails without touching individual transactions.

Tools and Platforms

Zapier remains the most accessible entry point for workflow automation, offering pre-built integrations and a visual interface that requires no coding knowledge. Make (formerly Integromat) provides more sophisticated logic and branching for complex workflows. n8n offers self-hosted flexibility for organizations with data security requirements. Each platform connects hundreds of applications through API integrations, allowing data to flow between CRMs, email systems, databases, spreadsheets, and communication tools.

These platforms increasingly incorporate AI capabilities directly—sentiment analysis for routing, content generation for personalization, entity extraction for data processing. The combination of workflow logic and AI processing creates systems that handle nuanced tasks previously requiring human judgment.

How to Start Learning

Identify a single repetitive task that involves moving information between two platforms. Document every step currently performed manually. Map the data transformation that occurs—what fields need matching, what format conversions happen, what decisions get made. Build a simple two-step automation that replicates this process, then test with real data to identify edge cases.

Gradually increase complexity by adding conditional logic, data enrichment, and error handling. Learn to think in terms of triggers, actions, and filters rather than linear task sequences. The progression from simple automations to complex workflows happens through iteration—each successful automation reveals opportunities for connecting additional systems. Advanced practitioners eventually design entire business processes as interconnected workflows, treating manual intervention as the exception rather than the default.
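Thinking in triggers, filters, and actions can be made concrete with a few lines of code. The sketch below is not how Zapier, Make, or n8n work internally; `Workflow`, the event fields, and the keyword-based `tag_priority` step (a stand-in for an AI classification call) are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional

Event = dict  # e.g. a parsed form submission


@dataclass
class Workflow:
    name: str
    trigger: str                              # event type that starts the workflow
    filters: list[Callable[[Event], bool]]    # every filter must pass
    actions: list[Callable[[Event], Event]]   # run in order; each returns the updated event

    def handle(self, event_type: str, event: Event) -> Optional[Event]:
        if event_type != self.trigger:
            return None
        if not all(check(event) for check in self.filters):
            return None
        for action in self.actions:
            event = action(event)
        return event


def has_email(event: Event) -> bool:
    return bool(event.get("email"))


def normalize_email(event: Event) -> Event:
    return {**event, "email": event["email"].strip().lower()}


def tag_priority(event: Event) -> Event:
    # Stand-in for an AI step; a real workflow might call a model here.
    urgent = "urgent" in event.get("message", "").lower()
    return {**event, "priority": "high" if urgent else "normal"}


lead_workflow = Workflow(
    name="form-to-crm",
    trigger="form_submitted",
    filters=[has_email],
    actions=[normalize_email, tag_priority],
)

result = lead_workflow.handle(
    "form_submitted",
    {"email": " Ana@Example.com ", "message": "Urgent: need a quote"},
)
print(result)
```

Events that do not match the trigger or fail a filter return `None`, which is the code equivalent of a workflow that never fires.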

3. AI Agents

AI agents are autonomous systems designed to perceive goals, make decisions, take actions, and learn from results without requiring step-by-step human instruction. Unlike simple chatbots that respond to individual queries, agents maintain context across interactions, access external tools and data sources, break complex objectives into subtasks, and adapt strategies based on outcomes. These systems represent a fundamental shift from AI as a reactive tool to AI as a proactive participant in achieving objectives.

The distinction matters because agents can complete work rather than just assist with it. A customer service chatbot answers questions. A customer service agent resolves issues by checking order status, processing refunds, updating account information, and coordinating with fulfillment systems. The architecture requires orchestrating multiple AI calls, managing state across interactions, handling tool execution, and maintaining safety boundaries. For those exploring how to build AI agents without coding, no-code platforms now abstract much of this complexity.

Why It Matters

Agents compress the time between intention and completion. Instead of manually executing a research project—searching sources, extracting information, synthesizing findings, formatting reports—an agent system handles the entire pipeline with oversight rather than constant direction. Marketing teams deploy agents to monitor brand mentions, analyze sentiment, draft responses, and schedule posts. Product teams use agents to aggregate user feedback, identify patterns, generate insights, and update documentation.

The business impact comes from task completion rates. Humans get interrupted, context-switch, and forget subtasks. Agents execute comprehensively, following defined processes until objectives are met or blockers are identified. A sales agent can qualify leads, research companies, draft personalized outreach, schedule follow-ups, and update CRM records—the entire lead development process running continuously rather than intermittently.

Tools and Platforms

CrewAI provides a framework for building multi-agent systems where specialized agents collaborate on complex tasks, each with defined roles and expertise. LangChain offers the foundational components for building agent systems—memory management, tool integration, reasoning loops, and execution monitoring. AutoGen from Microsoft enables agent conversations and collaborative problem-solving. Each platform approaches agent architecture differently, but all share the core capability of autonomous task execution.

These frameworks handle the infrastructure challenges—maintaining conversation history, managing API calls, coordinating tool usage, implementing error recovery. Builders focus on defining agent behavior, tool availability, and success criteria rather than low-level orchestration.

How to Start Learning

Start by designing a single-agent system for a bounded task with clear success criteria. Research assistant agents work well as first projects—given a topic, the agent searches sources, extracts relevant information, synthesizes findings, and generates a formatted report. Define the tools the agent can access (search, extraction, summarization), specify the output structure, and establish quality checks.
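A bounded research agent of this kind reduces to a loop: decide the next step, call a tool, record the result, and stop when done or when a step limit is hit. In the sketch below every name is hypothetical, the tools return canned strings, and `choose_next_step` is a hard-coded stand-in for the decision an LLM would make in a real agent.

```python
from typing import Callable


# Hypothetical tools; real ones would call a search API or a model.
def search(topic: str) -> list[str]:
    return [f"{topic}: source A", f"{topic}: source B"]


def summarize(passages: list[str]) -> str:
    return f"Summary of {len(passages)} sources."


TOOLS: dict[str, Callable] = {"search": search, "summarize": summarize}


def choose_next_step(state: dict) -> str:
    """Stand-in for the model's decision about what to do next."""
    if "sources" not in state:
        return "search"
    if "report" not in state:
        return "summarize"
    return "done"


def run_agent(topic: str, max_steps: int = 5) -> dict:
    state = {"topic": topic, "log": []}
    for _ in range(max_steps):  # hard cap acts as a simple safety boundary
        step = choose_next_step(state)
        state["log"].append(step)  # keep a trace for later inspection
        if step == "done":
            break
        if step == "search":
            state["sources"] = TOOLS["search"](state["topic"])
        elif step == "summarize":
            state["report"] = TOOLS["summarize"](state["sources"])
    return state


final_state = run_agent("vector databases")
print(final_state["log"])
print(final_state["report"])
```

The `log` list is the part worth keeping when a real model replaces the stub, since it is what makes the agent's decisions reviewable.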

Build iteratively, testing each component independently before connecting them into an autonomous loop. Monitor agent reasoning to understand decision-making patterns and failure modes. Gradually increase task complexity and reduce human intervention. The progression from monitored execution to autonomous operation requires developing trust in agent reliability—something only achieved through systematic testing and refinement. Advanced agent builders eventually design multi-agent systems where specialized agents collaborate, each contributing domain expertise to complex objectives.

4. Retrieval Augmented Generation

Retrieval Augmented Generation, commonly referred to as RAG, combines large language models with external knowledge retrieval systems to produce responses grounded in specific, verifiable information. Instead of relying solely on the model’s training data, RAG systems query relevant documents, databases, or knowledge bases during response generation, incorporating retrieved context into answers. This architecture reduces hallucination, one of the fundamental LLM challenges, while enabling AI systems to work with proprietary information, current data, and domain-specific knowledge not present in base models.

The technical process involves converting documents into embeddings, storing them in vector databases, performing semantic search to find relevant passages, and injecting retrieved context into prompts before generation. Put simply, the model reads relevant documents at query time before answering, so responses draw on actual source material rather than on statistical patterns alone. This approach lets AI systems answer questions about internal policies, product documentation, customer data, and specialized knowledge with much better factual accuracy, provided retrieval surfaces the right passages.
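The embed, store, search, and inject steps can be seen end to end in a toy version. Note the swap: the `embed` function below uses plain word counts, which only matches shared words and is not semantic; a real system would call an embedding model and a vector database. The documents and all function names are invented for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


documents = [
    "Refunds are issued within 30 days of delivery.",
    "Standard shipping takes five business days.",
    "Support is available by email on weekdays.",
]
# A list of (text, vector) pairs stands in for a vector database.
index = [(doc, embed(doc)) for doc in documents]


def retrieve(query: str, k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


question = "How many days do refunds take?"
context = retrieve(question)
# Inject the retrieved passage into the prompt before generation.
prompt = (
    "Answer using only this context:\n"
    + "\n".join(context)
    + f"\n\nQuestion: {question}"
)
print(prompt)
```

Swapping `embed` for a real embedding model changes the quality of matching but not the shape of the pipeline.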

Why It Matters

RAG transforms unreliable AI assistants into trustworthy information systems. Legal teams can query contract databases knowing responses cite actual agreements. Medical practices can provide patient information grounded in specific records. Enterprise support teams can answer product questions using current documentation. The difference between base LLMs and RAG systems is the difference between educated guessing and referenced answers.

Cost efficiency is the secondary benefit. Fine-tuning models on proprietary data is expensive and requires retraining as information changes. RAG systems update by adding new documents to the knowledge base—no model retraining required. A company can maintain current AI capabilities by updating document collections rather than managing model versions. This architecture also enables precise attribution, showing which sources informed each response, enabling human verification of AI outputs.

Tools and Platforms

LangChain provides comprehensive RAG implementation frameworks, handling document loading, embedding generation, vector storage, retrieval logic, and prompt construction. Vectara offers managed RAG infrastructure, eliminating the need to maintain vector databases and embedding pipelines. LlamaIndex specializes in connecting LLMs to diverse data sources—APIs, databases, file systems—with sophisticated retrieval strategies for complex information architectures.

Vector databases like Pinecone, Weaviate, and Chroma store document embeddings and enable semantic search at scale. These systems match the meaning of queries to relevant passages rather than relying on keyword matching, enabling natural language questions against technical documentation or unstructured data.

How to Start Learning

Begin with a focused document collection—company wikis, product documentation, research papers—and implement basic semantic search. Convert documents into chunks, generate embeddings using available models (OpenAI, Cohere, open-source alternatives), store vectors in a simple database, and test retrieval quality with diverse questions. Evaluate whether the system returns relevant passages before attempting response generation.
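Chunking is usually the first step to get right. A common approach, sketched here under simple assumptions, is fixed-size word windows with some overlap so that a sentence cut at a boundary still appears whole in a neighboring chunk. The function name and default sizes are arbitrary choices for illustration, not recommended settings.

```python
def chunk_words(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into word windows of `size`, each sharing `overlap` words with the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(12))
for chunk in chunk_words(sample, size=5, overlap=2):
    print(chunk)
```

Chunk size and overlap are among the parameters worth varying while testing retrieval quality with real questions.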

Once retrieval works reliably, integrate with an LLM by constructing prompts that include retrieved context and instruct the model to base answers on provided information. Test boundary cases: questions with no relevant documents, contradictory information across sources, queries requiring synthesis across multiple passages. Refine chunk size, embedding models, and retrieval strategies based on performance. Advanced implementations eventually incorporate hybrid search (combining semantic and keyword matching), reranking algorithms, and source citation mechanisms that link every statement to supporting documents.
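Prompt construction and the "no relevant documents" boundary case can be handled in one small function. This is a sketch with assumptions: passages arrive as `(source_id, text, score)` tuples from some retriever, the `min_score` threshold of 0.2 is an arbitrary placeholder, and the source ids and passage text are invented.

```python
from typing import Optional

Passage = tuple[str, str, float]  # (source_id, text, retrieval_score)


def build_grounded_prompt(
    question: str, passages: list[Passage], min_score: float = 0.2
) -> Optional[str]:
    """Build a prompt from sufficiently relevant passages, or return None if there are none."""
    relevant = [p for p in passages if p[2] >= min_score]
    if not relevant:
        # Nothing relevant was retrieved: the caller should decline to answer
        # rather than let the model guess.
        return None
    context = "\n".join(f"[{source_id}] {text}" for source_id, text, _ in relevant)
    return (
        "Answer the question using only the sources below. "
        "Cite source ids in square brackets. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


passages = [
    ("policy-12", "Refunds are issued within 30 days.", 0.81),
    ("faq-3", "Shipping takes five days.", 0.05),
]
grounded = build_grounded_prompt("How long do refunds take?", passages)
print(grounded)
```

Labeling each passage with its source id is what later makes citation checks possible, because every bracketed id in the answer can be traced back to a document.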

5. Fine-Tuning and Custom GPTs

Fine-tuning involves training pre-existing language models on domain-specific data to adapt behavior, tone, formatting, and domain knowledge to particular use cases. Rather than building models from scratch, fine-tuning starts with capable foundation models and adjusts them through continued training on curated datasets. Custom GPTs represent a lighter-weight approach: they shape model behavior through custom instructions, uploaded reference files, and in-context examples, without changing model weights or incurring fine-tuning overhead. Both approaches solve the problem of generic AI that lacks specialized knowledge or organizational context.

The distinction between base models and fine-tuned versions shows up in consistency, accuracy, and usability. A base model might produce medically accurate content but in clinical language unsuited to patients. A fine-tuned model trained on patient communication examples produces accurate information in accessible language. Fine-tuning LLMs for niche industries enables AI systems that understand industry terminology, follow regulatory requirements, and maintain brand voice without extensive prompting.

Why It Matters

Generic models trained on internet-scale data excel at general tasks but struggle with specialized domains. Legal models need understanding of jurisdiction-specific precedent. Medical models require knowledge of institutional protocols. Financial models must handle proprietary risk frameworks. Fine-tuning embeds this knowledge directly into model weights, making specialized behavior the default rather than requiring complex prompts for every interaction.

Custom behavior becomes portable. A properly fine-tuned model maintains consistent tone, structure, and domain knowledge across all interactions without users needing to understand prompt engineering. Support teams, content creators, and analysts can use specialized models as naturally as base models, with expertise built in rather than manually specified. A customer service model fine-tuned on past interactions and resolution patterns can provide more consistent guidance than a base model that relies on prompts alone.

Tools and Platforms

OpenAI provides fine-tuning capabilities for GPT models through their API, enabling organizations to train on proprietary data while leveraging foundation model capabilities. Hugging Face offers extensive fine-tuning infrastructure for open-source models, including pre-training scripts, dataset management, and model hosting. Cohere specializes in enterprise fine-tuning with data privacy guarantees, enabling training on sensitive information without external data exposure.

The process involves preparing training datasets (from a few hundred to several thousand input-output examples, depending on the task), selecting appropriate hyperparameters, managing training runs, evaluating model performance, and deploying updated models into production systems. Modern platforms handle much of the technical complexity, focusing user effort on dataset quality and evaluation frameworks.

How to Start Learning

Start with the dataset before touching models. Collect examples that represent desired behavior—customer service conversations, technical documentation, branded content, domain-specific analysis. Evaluate dataset quality, consistency, and coverage. Poor training data produces unreliable models regardless of technical implementation. Aim for hundreds of high-quality examples before attempting fine-tuning.
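Dataset checks are easy to automate before any training run. The sketch below assumes the chat-style JSONL layout (one JSON object per line with a `messages` list of role and content pairs) that OpenAI documents for fine-tuning at the time of writing; confirm the current format in the provider's documentation. The helper names and the sample conversations are made up.

```python
import json


def to_chat_example(system: str, user: str, assistant: str) -> dict:
    """Wrap one input-output pair in a chat-style training record."""
    return {
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
            {"role": "assistant", "content": assistant},
        ]
    }


def find_problems(examples: list[dict]) -> list[str]:
    """Flag records that end on the wrong role, contain empty content, or repeat an earlier record."""
    problems = []
    seen = set()
    for i, example in enumerate(examples):
        messages = example.get("messages", [])
        if not messages or messages[-1].get("role") != "assistant":
            problems.append(f"example {i}: last message must be from the assistant")
        if any(not m.get("content", "").strip() for m in messages):
            problems.append(f"example {i}: empty content")
        key = json.dumps(example, sort_keys=True)
        if key in seen:
            problems.append(f"example {i}: duplicate")
        seen.add(key)
    return problems


def to_jsonl(examples: list[dict]) -> str:
    """Serialize records as one JSON object per line."""
    return "\n".join(json.dumps(example) for example in examples)


# Invented sample data for illustration.
examples = [
    to_chat_example("You are a support agent.", "Where is my order?", "Could you share your order number?"),
    to_chat_example("You are a support agent.", "Where is my order?", "Could you share your order number?"),
    to_chat_example("You are a support agent.", "Cancel my plan.", "  "),
]
print(find_problems(examples))
```

Checks like these catch structural issues only; coverage and consistency of the examples still need human review.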

Use OpenAI’s fine-tuning API for initial experiments, as it abstracts infrastructure complexity and provides clear performance metrics. Train a model on a focused task with clear success criteria, then systematically evaluate outputs against base model performance. Document where fine-tuning improves results and where it introduces problems. Understanding the relationship between training data patterns and model behavior is more valuable than technical infrastructure knowledge. Advanced practitioners eventually manage multi-stage training pipelines, combining fine-tuning with reinforcement learning from human feedback and continuous model improvement based on production usage.
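Comparing a fine-tuned model against the base model needs a fixed test set and a scoring rule. The sketch below uses normalized exact match, which is one simple option suited to short, structured outputs; it is an assumption, not the metric any platform reports. The reference answers and both sets of outputs are invented to show the mechanics and are not real results.

```python
def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not count as errors."""
    return " ".join(text.lower().split())


def exact_match_rate(outputs: list[str], references: list[str]) -> float:
    """Fraction of outputs that equal their reference after normalization."""
    if len(outputs) != len(references):
        raise ValueError("outputs and references must have the same length")
    if not references:
        return 0.0
    hits = sum(normalize(o) == normalize(r) for o, r in zip(outputs, references))
    return hits / len(references)


# Invented data for illustration only.
references = ["refund approved", "escalate to billing", "ask for order number"]
base_outputs = ["Refund approved", "Please contact support", "ask for order number"]
tuned_outputs = ["refund approved", "Escalate to  billing", "ask for order number"]

base_score = exact_match_rate(base_outputs, references)
tuned_score = exact_match_rate(tuned_outputs, references)
print(f"base: {base_score:.2f}, fine-tuned: {tuned_score:.2f}")
```

For free-form text, exact match is too strict, and a rubric or human review is a better fit; the important part is scoring both models on the same held-out examples.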

6. Multimodal AI

Multimodal AI refers to systems that process and generate content across multiple formats—text, images, audio, and video—either simultaneously or in integrated workflows. Unlike single-modality models that handle only text or only images, multimodal systems understand relationships between different content types, enabling tasks like visual question answering, audio transcription with context awareness, and video generation from text descriptions. These capabilities mirror human perception, which naturally integrates information from multiple senses rather than processing them in isolation.

The business applications extend beyond individual format processing to cross-modal understanding. Multimodal AI use cases for business include analyzing video call recordings to generate meeting summaries with visual context, processing product images to generate detailed descriptions, transcribing audio with speaker identification and sentiment analysis, and creating video content from text scripts with appropriate visual elements. The integration of modalities enables richer analysis and more sophisticated automation than single-format approaches.

Why It Matters

Most business information exists across multiple formats. Customer interactions happen through text, voice, and video. Product information includes specifications, images, and demonstration videos. Training materials combine presentations, audio narration, and visual examples. Processing this information effectively requires systems that handle all modalities cohesively rather than as separate inputs requiring manual coordination.

Multimodal capabilities compress workflows that previously required multiple specialized tools. A content team can generate blog posts from video transcripts, create social media images from article content, produce video clips from written scripts, and generate audio versions of text—all within integrated systems rather than disconnected tools. The efficiency gain comes from eliminating manual format translation and enabling direct content transformation across mediums. Marketing teams exploring multimodal AI use cases for business often discover that cross-format content production becomes their highest-value application.

Tools and Platforms

GPT-4 includes vision capabilities, enabling text-based questions about images, document analysis from photos, and visual reasoning tasks. Gemini handles text, images, audio, and video natively, processing multiple formats within single interactions. Grok integrates real-time image generation with conversational AI, enabling visual ideation during text conversations. Each platform implements multimodality differently, with varying strengths across format combinations.

Specialized tools handle specific multimodal tasks—Whisper for audio transcription, DALL-E and Midjourney for image generation, Runway and Pika for video creation. The trend moves toward integrated platforms where format boundaries disappear, enabling natural workflows that incorporate whichever modalities best serve the task.

How to Start Learning

Experiment with simple cross-modal tasks: upload an image and ask detailed questions about its content, generate images from text descriptions, transcribe audio and analyze sentiment, extract structured data from photos of documents. Evaluate quality, limitations, and appropriate use cases for each modality combination. Understanding where multimodal processing excels and where it struggles informs effective system design.

Build a workflow that combines multiple modalities toward a single outcome. For example, take a product image, generate a detailed description, create marketing copy variations, produce a short video script, and generate accompanying visuals. Track quality degradation across format transitions and identify where human review remains necessary. Advanced multimodal work involves designing systems where format selection happens dynamically based on content requirements, audience preferences, and distribution channels—treating modality as a strategic choice rather than a technical constraint.

7. AI Video Generation

AI video generation refers to tools and techniques that produce video content from text scripts, images, or other inputs without traditional filming, editing, or production infrastructure. These systems handle visual generation, motion synthesis, scene composition, transitions, effects, and audio integration, compressing workflows that previously required extensive equipment, expertise, and time. The output ranges from simple slideshows with voiceover to sophisticated generated scenes with realistic motion and professional production quality.

The technology has progressed from novelty demonstrations to practical business applications. AI video generation tools for marketing teams enable rapid content production for social media, product demonstrations, educational content, and advertising campaigns. Rather than replacing video production entirely, these tools democratize content creation, enabling organizations to produce more video at lower cost while reserving traditional production for high-stakes content requiring unique creative direction.

Why It Matters

Video dominates digital engagement metrics across platforms, yet production bottlenecks limit most organizations to minimal video output. Scripting, filming, editing, revision, and distribution require coordinated effort from multiple specialists. AI generation removes production constraints, enabling marketing teams, educators, and content creators to produce video as easily as writing documents. The barrier to video content drops from production capability to strategic decisions about what messages deserve video treatment.

Speed and iteration cycles compress dramatically. Production that traditionally took days or weeks becomes possible in hours. Testing multiple video variations for different audiences shifts from prohibitively expensive to standard practice. A marketing team using these tools can produce dozens of social media videos weekly, testing messaging, pacing, and visual styles based on performance data rather than being limited by production capacity.

Tools and Platforms

Runway provides comprehensive video editing and generation capabilities, including text-to-video, image-to-video, video inpainting, and motion tracking. OpusClip specializes in transforming long-form content into short clips optimized for social media, automatically identifying engaging moments and reframing for vertical formats. Pika focuses on controllable video generation from text prompts, enabling precise direction over visual style, motion, and scene composition.

Each platform approaches generation differently. Some prioritize creative control and sophisticated editing. Others optimize for speed and volume. Some handle live-action styles while others excel at animation and motion graphics. Understanding these differences enables matching tools to specific content requirements rather than forcing all video through a single system.

How to Start Learning

Begin with simple script-to-video projects using existing platforms. Write a 30-second product description, generate corresponding video, evaluate quality and usability. Test different visual styles, pacing options, and format variations. Focus initially on where generated video creates value rather than pursuing technical sophistication. Many teams discover that simple, high-volume video outperforms elaborate productions in engagement metrics.

Develop systematic approaches to scripting, visual direction, and quality evaluation. Document what works—video length, scene composition, motion types, text overlay styles, audio choices. Build templates for common video types (product features, customer testimonials, educational explainers, social media clips) that streamline production. Advanced usage involves integrating video generation into larger content workflows where written content automatically produces corresponding video versions, subject lines generate ad variations, and customer data personalizes video outreach at scale.

8. AI Tool Stacking

AI tool stacking refers to strategically combining multiple AI platforms, automation systems, and data sources into integrated workflows that compound effectiveness rather than operating in isolation. Instead of using individual tools for separate tasks, stacking creates pipelines where outputs from one system become inputs for another, data flows automatically between platforms, and multiple AI capabilities combine to handle complex objectives. The approach treats individual tools as components within larger systems rather than standalone solutions.

Most organizations accumulate AI tools reactively, adding capabilities as needs arise without designing coherent architectures. This produces tool sprawl, where teams maintain dozens of subscriptions with minimal integration and must constantly switch context and transfer data by hand. Stacking AI tools for productivity means designing deliberate systems in which tools complement each other’s strengths, share data efficiently, and automate handoffs between processing stages.
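The pipeline idea described above, where one tool's output becomes the next tool's input, can be sketched in a few lines of Python. This is a minimal illustration, not a real integration: the three stage functions (`draft`, `proofread`, `publish`) are hypothetical stand-ins for calls to a text generator, a grammar tool, and a CMS.

```python
from functools import reduce
from typing import Callable

# A stage takes a work item (a dict) and returns an updated copy.
Stage = Callable[[dict], dict]

def draft(item: dict) -> dict:
    # Hypothetical stand-in for a text-generation call.
    return {**item, "draft": f"Draft about {item['topic']}"}

def proofread(item: dict) -> dict:
    # Hypothetical stand-in for a grammar or style tool.
    return {**item, "draft": item["draft"].strip() + "."}

def publish(item: dict) -> dict:
    # Hypothetical stand-in for a CMS publishing step.
    return {**item, "status": "published"}

def run_pipeline(item: dict, stages: list[Stage]) -> dict:
    # Feed each stage's output into the next stage, in order.
    return reduce(lambda acc, stage: stage(acc), stages, item)

result = run_pipeline({"topic": "AI tool stacking"}, [draft, proofread, publish])
```

Because every stage shares the same input and output shape, a stage can be swapped or added without touching the others, which is the property that makes a stack easier to maintain than a set of one-off integrations.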

Why It Matters

Isolated tools deliver linear benefits. Integrated stacks deliver compounding returns. A content team using ChatGPT generates drafts. Adding Grammarly improves quality. Connecting to a CMS enables publishing. Integrating analytics informs optimization. Linking to social scheduling automates distribution. Each addition multiplies rather than adds value, creating systems that handle entire workflows rather than individual steps. The productivity gains from stacking come from eliminating friction between steps and enabling seamless handoffs.

Strategic stacking also reduces costs by avoiding functional duplication. Many AI platforms offer overlapping capabilities—document analysis, content generation, data extraction. Thoughtful stacking identifies the best tool for each specific function rather than paying for redundant features across multiple subscriptions. An effective stack uses fewer tools more deeply rather than more tools superficially.

Tools and Platforms

Notion serves as a central knowledge hub that connects to AI writing assistants, task automation, and collaborative editing. ClickUp integrates project management with AI-powered task generation, status updates, and reporting. Zapier and Make act as connective tissue, moving data between specialized tools and triggering AI processing based on events across platforms. Airtable combines database functionality with automation and AI capabilities for structured information management.

The power comes from connection rather than individual capabilities. Each platform’s API enables integration with other systems, allowing data to flow automatically and actions to trigger across tools. Modern stacks treat APIs as first-class features, designing workflows that span platforms rather than remaining confined within single applications.

How to Start Learning

Map current tool usage and identify integration opportunities. Document where information gets manually transferred between systems, where duplicate data entry occurs, and where context gets lost during handoffs. These friction points represent stacking opportunities. Start with a two-tool integration that eliminates a specific manual process, then gradually expand connections.

Learn API basics and automation platforms to enable custom integrations beyond pre-built connections. Understanding webhooks, authentication, data transformation, and error handling unlocks sophisticated stacking that goes beyond surface-level tool combinations. Advanced practitioners design entire business operations as integrated stacks where AI, automation, and human oversight combine seamlessly, with tools selected based on specific functional strengths rather than marketing claims about comprehensiveness.
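To make the webhook, authentication, data transformation, and error handling concepts concrete, here is a minimal sketch of a webhook receiver's core logic. It assumes the sending tool signs the raw request body with HMAC-SHA256 and a shared secret, which is a common convention but not universal; the function names and the `title` and `source` payload fields are hypothetical.

```python
import hashlib
import hmac
import json

def verify_signature(secret: bytes, body: bytes, signature_hex: str) -> bool:
    # Authentication: recompute the HMAC and compare in constant time.
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature_hex)

def handle_webhook(secret: bytes, body: bytes, signature_hex: str) -> dict:
    # Error handling: reject unauthenticated or malformed requests early.
    if not verify_signature(secret, body, signature_hex):
        raise PermissionError("signature mismatch")
    try:
        payload = json.loads(body)
    except json.JSONDecodeError as exc:
        raise ValueError("body is not valid JSON") from exc
    if "title" not in payload:
        raise ValueError("payload is missing the 'title' field")
    # Data transformation: pass along only what the next tool needs.
    return {
        "title": str(payload["title"]).strip(),
        "source": payload.get("source", "unknown"),
    }
```

The dictionary returned by `handle_webhook` would then be handed to the next tool in the stack. Check each platform's documentation for its actual signing scheme before relying on this pattern.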

9. LLM Evaluation and Management

LLM evaluation and management encompasses the practices, frameworks, and tools used to monitor, benchmark, and optimize large language model performance in production environments. Unlike software testing that checks for binary correctness, LLM evaluation assesses quality, relevance, factual accuracy, safety, cost efficiency, and reliability across diverse inputs and use cases. Management involves version control, prompt tracking, usage monitoring, cost analysis, and continuous improvement based on production data. These disciplines transform experimental AI implementations into trustworthy operational systems.

Most organizations deploy LLMs without systematic evaluation, relying on subjective quality assessments and reactive debugging. This approach works for low-stakes applications but fails for systems where reliability matters. For startups in particular, LLM evaluation frameworks provide structured approaches to measuring model performance, comparing options, detecting regressions, and ensuring consistent quality as systems evolve.

Why It Matters

Production AI systems degrade invisibly. Models change, edge cases emerge, prompts drift, costs escalate, and quality slips without obvious indicators. Systematic evaluation catches these problems before they impact users or business outcomes. A customer service AI that slowly becomes more verbose wastes tokens and user patience. Evaluation metrics surface this trend early, enabling correction before significant impact.
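The verbosity example can be turned into a simple metric. The sketch below compares the average response length in the most recent window of interactions against the earliest window. The function names, the window size, and the 1.25 threshold are illustrative assumptions, not recommended values.

```python
from statistics import mean

def verbosity_drift(lengths: list[int], window: int = 50) -> float:
    """Ratio of the recent mean response length to the baseline mean."""
    if len(lengths) < 2 * window:
        raise ValueError("need at least two full windows of data")
    baseline = mean(lengths[:window])   # earliest responses
    recent = mean(lengths[-window:])    # latest responses
    if baseline == 0:
        raise ValueError("baseline length is zero")
    return recent / baseline

def is_drifting(lengths: list[int], window: int = 50, threshold: float = 1.25) -> bool:
    # Flag when recent responses are noticeably longer than the baseline.
    return verbosity_drift(lengths, window) > threshold
```

Here `lengths` would hold token or word counts taken from logged responses in chronological order.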

Cost management is the secondary imperative. Token usage compounds across interactions, making seemingly small inefficiencies expensive at scale. Evaluation frameworks track cost per interaction, identify optimization opportunities, and justify model selection decisions with concrete performance data. Startups that adopt evaluation frameworks often find that systematic monitoring reveals cost savings that exceed the cost of the evaluation infrastructure itself.
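Cost per interaction is simple arithmetic once token counts are logged. The sketch below assumes per-1,000-token pricing with separate input and output rates, which is a common billing model; the function names are hypothetical and the prices in the usage lines are placeholders, not real vendor rates.

```python
def interaction_cost(
    prompt_tokens: int,
    completion_tokens: int,
    input_price_per_1k: float,
    output_price_per_1k: float,
) -> float:
    # Input and output tokens are usually billed at different rates.
    input_cost = (prompt_tokens / 1000) * input_price_per_1k
    output_cost = (completion_tokens / 1000) * output_price_per_1k
    return input_cost + output_cost

def monthly_cost(cost_per_interaction: float, interactions_per_day: int, days: int = 30) -> float:
    # Small per-call costs compound with volume.
    return cost_per_interaction * interactions_per_day * days

# Placeholder prices for illustration only.
per_call = interaction_cost(1000, 500, input_price_per_1k=0.5, output_price_per_1k=1.5)
per_month = monthly_cost(per_call, interactions_per_day=100)
```

Running the same calculation for two candidate models on the same logged traffic gives a concrete basis for a model selection decision.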

Tools and Platforms

Helicone provides observability and monitoring for LLM applications, tracking prompts, responses, costs, latencies, and user feedback in production. PromptLayer offers version control for prompts, enabling teams to test variations, track changes, and understand performance impacts across model updates. TruLens focuses on evaluation frameworks that assess truthfulness, relevance, and groundedness of LLM outputs, particularly for retrieval-augmented systems.

These platforms handle logging infrastructure, metric calculation, visualization, and alerting, enabling teams to focus on interpretation and improvement rather than measurement mechanics. Integration typically requires adding a few lines of code to existing LLM calls, making adoption straightforward for teams already deploying AI systems.

How to Start Learning

Define quality metrics for a specific use case before implementing evaluation infrastructure. What makes a good response? Accuracy? Brevity? Tone? Factual groundedness? Citation quality? Specific metrics depend on application context—customer support prioritizes accuracy and politeness, content generation prioritizes creativity and structure, data extraction prioritizes precision and recall. Quantify these qualities to enable systematic measurement.
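As one example of quantifying a quality, precision and recall for a data extraction task can be computed directly from the set of extracted items and the set of correct items. This is a minimal sketch; the function name and the sample invoice fields are hypothetical.

```python
def precision_recall(predicted: set[str], expected: set[str]) -> tuple[float, float]:
    # True positives are items that were both extracted and correct.
    true_positives = len(predicted & expected)
    # Precision: what fraction of extracted items were correct.
    precision = true_positives / len(predicted) if predicted else 0.0
    # Recall: what fraction of correct items were extracted.
    recall = true_positives / len(expected) if expected else 0.0
    return precision, recall

extracted = {"invoice_number", "total", "vendor"}
ground_truth = {"invoice_number", "total", "due_date", "currency"}
precision, recall = precision_recall(extracted, ground_truth)
```

In this example two of the three extracted fields are correct, and two of the four expected fields were found, so precision is 2/3 and recall is 1/2.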

Implement basic logging for all LLM interactions, capturing prompts, responses, model selection, and metadata. Analyze patterns in successful and unsuccessful interactions. Build evaluation datasets from production examples, including both high-quality outputs and failures. Test new prompts and models against these benchmarks before deploying changes. Advanced evaluation practices involve automated testing pipelines, A/B testing frameworks, and continuous monitoring that surfaces quality issues as they emerge, enabling rapid response before widespread impact.
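The logging and benchmark steps above can be sketched as follows. This is an assumption-laden illustration: `log_interaction` writes one JSON record per line to any text stream, and `pass_rate` runs a candidate prompt or model (represented by the hypothetical `generate` callable) against an evaluation set, using a caller-supplied `check` function to decide whether each output is acceptable.

```python
import io
import json
import time
from typing import Callable, TextIO

def log_interaction(stream: TextIO, prompt: str, response: str, model: str, **metadata) -> None:
    # One JSON object per line keeps the log easy to append to and parse.
    record = {
        "ts": time.time(),
        "model": model,
        "prompt": prompt,
        "response": response,
        "metadata": metadata,
    }
    stream.write(json.dumps(record) + "\n")

def pass_rate(
    eval_set: list[dict],
    generate: Callable[[str], str],
    check: Callable[[str, str], bool],
) -> float:
    # Fraction of evaluation cases where the output passes the check.
    if not eval_set:
        raise ValueError("evaluation set is empty")
    passed = sum(1 for case in eval_set if check(generate(case["prompt"]), case["expected"]))
    return passed / len(eval_set)

# Usage with an in-memory log; a real system would write to a file or service.
log = io.StringIO()
log_interaction(log, "Summarize the refund policy", "Refunds within 30 days.", model="stub-model", channel="support")
```

Comparing the pass rate of a new prompt against the current one before deployment is a basic form of regression testing.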

10. AI SEO

AI SEO refers to optimizing content, brand signals, and online presence for discovery and prominence in AI-powered search engines and answer systems like ChatGPT, Perplexity, Google’s AI Overviews, and Claude. Unlike traditional SEO that optimizes for ranking in result lists, AI SEO focuses on being selected as source material for generated responses, cited in AI-generated summaries, and accurately represented when AI systems reference a brand or expertise area. The discipline combines technical optimization with content authority and structured information architecture.

The shift matters because AI search engines don’t present ranked lists—they synthesize answers from multiple sources and present conclusions directly. Being the tenth search result becomes irrelevant if the AI generates answers without surfacing individual sources. Success requires becoming a cited authority rather than achieving ranking positions. Organizations must optimize for ChatGPT search results and improve visibility in AI answers rather than traditional search engine rankings.

Why It Matters

AI-powered search is rapidly becoming the default information retrieval method. Users increasingly trust ChatGPT, Perplexity, and Google’s AI features to provide direct answers rather than navigating search results. Brands that don’t appear in AI-generated responses become invisible regardless of traditional SEO performance. Being omitted from AI answers means being excluded from customer awareness, consideration, and decision processes.

The opportunity for early movers is significant. AI search engine optimization strategies remain relatively unsophisticated across most industries, creating temporary advantages for organizations that invest in entity optimization, structured data, citation-worthy content, and brand authority signals. The technical approaches differ enough from traditional SEO that existing rankings don’t automatically translate to AI visibility, enabling strategic repositioning.

Tools and Platforms

Searchable provides AI search monitoring and optimization, tracking brand mentions across AI platforms, analyzing citation patterns, and identifying optimization opportunities. Google Search Console remains essential for understanding how Google’s AI features use content and which queries trigger featured snippets or AI overviews. Google Analytics 4 tracks traffic from AI-powered search, enabling measurement of AI visibility impact on business outcomes.

Schema markup, structured data implementation, and knowledge graph optimization require technical SEO platforms but deliver outsized returns in AI visibility. AI systems rely heavily on structured information to understand entities, relationships, and authority, making proper markup crucial for accurate representation in generated answers.

How to Start Learning

Audit current brand representation in AI systems by querying ChatGPT, Perplexity, and Google about the organization, products, and expertise areas. Document what information appears, what’s missing, what’s inaccurate, and which sources get cited. This baseline reveals optimization priorities—correcting misinformation, increasing citation frequency, or expanding topic coverage.

Implement structured data across digital properties, particularly schema markup for organizations, products, articles, and FAQs. Create citation-worthy content that AI systems recognize as authoritative—in-depth guides, original research, expert perspectives, and comprehensive resources. Focus on entity clarity rather than keyword density, ensuring that content clearly establishes expertise, relationships, and topical authority. Advanced AI SEO involves actively managing knowledge graph presence, cultivating authoritative backlinks that AI systems trust, and continuously monitoring AI representation to identify and correct emerging issues.
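As an illustration of schema markup for organizations and FAQs, the sketch below builds JSON-LD using the schema.org `Organization` and `FAQPage` types. The helper function names and the example values are hypothetical; the resulting JSON is typically embedded in a page inside a `<script type="application/ld+json">` tag.

```python
import json

def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    # sameAs links the entity to its other official profiles.
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    # Each FAQ entry is a Question with an acceptedAnswer of type Answer.
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

org_markup = organization_jsonld("Example Co", "https://example.com", ["https://example.com/profile"])
faq_markup = faq_jsonld([("What does Example Co do?", "It sells example products.")])
```

Generating markup from a single source of truth, rather than hand-editing it per page, helps keep entity information consistent across properties.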

11. AI Systems Thinking

AI systems thinking is the practice of designing integrated architectures that combine data infrastructure, AI models, automation workflows, and human oversight into cohesive, scalable solutions rather than treating AI as isolated tools. This approach views AI capabilities as components within larger systems that must handle data flow, error recovery, quality assurance, cost management, and continuous improvement. Systems thinking prioritizes reliability, maintainability, and business outcomes over impressive demonstrations or cutting-edge techniques.

Most AI implementations remain at the proof-of-concept stage because teams focus on model capabilities without designing systems around them. A marketing team might use ChatGPT effectively for individual tasks but struggle to scale content production because they lack infrastructure for prompt management, quality control, brand consistency, and workflow integration. For founders, AI systems thinking means architecting solutions that work reliably at scale, not just impressively in demos.

Why It Matters

Individual AI capabilities don’t create lasting competitive advantage—systems do. Competitors can access the same models, tools, and techniques. Differentiation comes from designing systems that combine capabilities effectively, maintain quality consistently, and adapt to changing requirements. An e-commerce company’s product description generator isn’t valuable because it uses GPT-4; it’s valuable because the system ingests product data automatically, maintains brand voice, optimizes for SEO, publishes to multiple channels, and continuously improves based on performance metrics.

Systems thinking also prevents technical debt accumulation. Ad-hoc AI implementations create maintenance burdens as prompts drift, models change, costs escalate, and quality degrades. Systematic architecture includes version control, monitoring, testing, documentation, and explicit ownership—treating AI systems as software that requires engineering discipline rather than as magic that works automatically.

Tools and Platforms

Zapier and Make serve as workflow orchestration platforms, connecting AI capabilities to data sources, business applications, and distribution channels. Miro provides visual collaboration for system design, enabling teams to map data flows, identify dependencies, and document architectures. Airtable combines database functionality with automation, creating lightweight infrastructure for managing AI workflows, tracking outputs, and monitoring performance.

The platforms matter less than the architectural thinking they enable. Successful AI systems thinking starts with defining business requirements, mapping information flows, identifying quality requirements, and designing solutions that treat AI as one component within larger technical and operational systems.

How to Start Learning

Select a specific business process currently involving manual AI usage. Document every step: where data originates, what transformations occur, how quality gets evaluated, where outputs go, what happens when things fail. Map this as a system diagram showing data flows, decision points, and handoffs between components. Identify which parts can be automated, which require AI capabilities, which need human oversight, and which involve technical integration.

Design a systematic solution rather than a workflow. How does the system maintain prompt versions? How does it detect quality degradation? How does it scale beyond one user? How does it recover from failures? How does it evolve as requirements change? These questions reveal the difference between using AI tools and building AI systems. Advanced systems thinking eventually encompasses infrastructure, security, compliance, cost optimization, and organizational change management—recognizing that technical capabilities alone don’t create business value without operational systems that deploy them effectively.
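One of the questions above, how a system recovers from failures, can be illustrated with a retry-and-fallback wrapper. This is a minimal sketch under stated assumptions: `primary` and `fallback` are hypothetical callables standing in for a preferred model and a backup, and the broad `except Exception` is for brevity; a real system would catch only the specific errors its client library raises.

```python
import time
from typing import Callable

def call_with_retry(
    primary: Callable[[str], str],
    fallback: Callable[[str], str],
    prompt: str,
    attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    for attempt in range(attempts):
        try:
            return primary(prompt)
        except Exception:
            # Exponential backoff between attempts, none after the last one.
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)
    # All attempts failed: degrade gracefully instead of crashing.
    return fallback(prompt)
```

Passing `sleep` as a parameter keeps the function testable without real delays. The same wrapper is a natural place to record which prompt version and model produced each output.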

12. AI Narrative Control

AI narrative control refers to deliberately shaping the information signals, structured data, and content patterns that AI systems use to understand, interpret, and represent brands, products, and expertise areas. Unlike passive SEO that reacts to algorithm changes, narrative control proactively manages the inputs AI systems rely on—knowledge bases, citation sources, entity relationships, and authoritative content—to influence how AI platforms characterize organizations when generating responses. This practice acknowledges that AI-generated content about brands will exist regardless of participation, making active narrative management necessary rather than optional.

The challenge stems from AI systems synthesizing information from diverse sources without clear visibility into weighting or selection criteria. A brand might have excellent content but poor knowledge graph representation, leading to inaccurate or incomplete AI-generated descriptions. Alternatively, outdated information or competitor content might dominate AI training data, shaping narratives contrary to current positioning. Brand optimization for AI search engines requires understanding how AI systems construct knowledge about entities and deliberately managing those signals.

Why It Matters

AI platforms increasingly mediate customer relationships. Potential customers ask ChatGPT or Perplexity for recommendations, explanations, and comparisons rather than searching directly. What AI systems “know” about a brand shapes purchase decisions, partner selection, hiring outcomes, and reputation. Losing control of this narrative means accepting whatever synthesis AI systems generate from available information, regardless of accuracy or strategic alignment.

Early narrative control creates compounding advantages. AI systems weight authoritative, well-structured information more heavily than scattered mentions. Organizations that establish clear entity representation, comprehensive structured data, and citation-worthy content become the default sources AI systems reference, creating self-reinforcing visibility. Competitors trying to displace established narratives face significant headwinds as AI systems develop “knowledge” about competitive landscapes.

Tools and Platforms

Searchable provides brand monitoring across AI platforms and narrative optimization recommendations. Wikidata offers structured knowledge base management, enabling direct contribution to one of AI systems’ primary knowledge sources. Google Search Console tracks how Google’s AI features use and represent content, providing feedback on narrative effectiveness.

Knowledge graph management, schema implementation, and citation building remain the core technical components. These aren’t specialized tools but rather systematic approaches to structuring information AI systems consume when building understanding about entities and relationships.

How to Start Learning

Audit current AI narratives by querying multiple platforms about the organization from different angles: company description, product explanations, competitive positioning, expertise areas, and factual accuracy. Compare AI-generated responses to intended messaging and identify gaps, inaccuracies, or missed opportunities. This reveals the current narrative baseline and prioritizes improvement areas.
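A first pass at comparing AI-generated responses with intended messaging can be as simple as checking which key facts appear in each response. The sketch below uses case-insensitive substring matching, which is a deliberately crude assumption: it will miss paraphrases, so treat the result as a starting point for manual review. The function name and the sample text are hypothetical.

```python
def message_coverage(response: str, key_facts: list[str]) -> dict:
    # Case-insensitive check for each intended fact in the AI response.
    text = response.lower()
    present = [fact for fact in key_facts if fact.lower() in text]
    missing = [fact for fact in key_facts if fact.lower() not in text]
    coverage = len(present) / len(key_facts) if key_facts else 0.0
    return {"present": present, "missing": missing, "coverage": coverage}

report = message_coverage(
    "Example Co is a software company founded in 2015.",
    ["software company", "founded in 2015", "based in Berlin"],
)
```

Running the same check across several platforms and over time gives a rough baseline for tracking whether the narrative is improving.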

Systematically build authoritative content that establishes clear entity relationships, expertise demonstrations, and factual grounding. Update Wikipedia and Wikidata entries with well-sourced information. Implement comprehensive schema markup across digital properties. Create citation-worthy resources that AI systems recognize as authoritative sources. Narrative control is a sustained practice, not a project—requiring ongoing monitoring, content development, and signal management as AI systems evolve. Advanced practitioners eventually develop early-warning systems that detect narrative shifts, proactive strategies for managing competitive positioning in AI contexts, and systematic approaches to becoming the definitive source for specific expertise areas.

Comparison Table: AI Skills at a Glance

Understanding the relative difficulty, business impact, and best applications of each AI skill helps prioritize learning paths based on career stage, role, and organizational needs. Some skills deliver immediate value with minimal technical complexity, while others require significant investment but create lasting competitive advantages. The following comparison provides a framework for strategic skill development rather than attempting to master every capability simultaneously.

| Skill | Difficulty Level | Business Impact | Best For | Learning Curve |
| --- | --- | --- | --- | --- |
| Prompt Engineering | Beginner | High | Content creators, marketers, general practitioners | Days to weeks |
| AI Workflow Automation | Beginner | High | Operations, small business owners, productivity-focused roles | Weeks |
| AI Agents | Intermediate | Very High | Developers, operations teams, task automation specialists | Months |
| Retrieval Augmented Generation | Intermediate | Very High | Knowledge workers, support teams, data-heavy organizations | Months |
| Fine-Tuning and Custom GPTs | Advanced | Very High | Developers, ML engineers, specialized domain applications | Months to years |
| Multimodal AI | Beginner | Medium | Content teams, creative professionals, integrated workflows | Weeks |
| AI Video Generation | Beginner | Medium | Marketing, content creation, social media management | Days to weeks |
| AI Tool Stacking | Intermediate | High | Productivity specialists, operations leaders, system architects | Weeks to months |
| LLM Evaluation and Management | Intermediate | High | Technical teams, quality assurance, production deployments | Months |
| AI SEO | Intermediate | Very High | Marketing teams, brand managers, growth leaders | Weeks to months |
| AI Systems Thinking | Advanced | Very High | Founders, technical architects, strategic decision-makers | Months to years |
| AI Narrative Control | Intermediate | Very High | Brand managers, executives, reputation management | Months |

Difficulty levels reflect the technical prerequisites and depth of knowledge required for competent execution, not the time to produce basic results. Many beginners can generate acceptable prompt engineering outputs quickly, but mastering the skill to professional standards takes sustained practice. Business impact assesses the potential value creation for organizations deploying these capabilities effectively, not the ease of demonstrating isolated results.

The “Best For” categories suggest primary roles where each skill creates immediate career leverage, though secondary applications exist across most functions. Learning curves estimate the time investment from initial exposure to practical competence, acknowledging that true expertise continues developing through sustained application. Organizations should prioritize skills based on strategic objectives rather than perceived ease, as capabilities with higher difficulty often deliver disproportionate competitive advantage.

How to Choose the Right AI Skill Based on Career Goals

Strategic AI skill development aligns with specific career trajectories rather than attempting comprehensive coverage. Different roles require different technical capabilities, and matching learning investments to professional objectives maximizes return on time and effort. The following guidance segments recommendations by career path, though many professionals will benefit from skills across multiple categories.

Founders and Executives should prioritize AI Systems Thinking and AI Narrative Control. Building sustainable AI-powered businesses requires architectural thinking that connects capabilities to business outcomes, not just impressive demonstrations. Understanding how to design reliable systems that scale, maintain quality, and create defensible advantages determines whether AI implementations deliver lasting value or become technical debt. Narrative control ensures brand representation in AI contexts matches strategic positioning as these platforms increasingly mediate customer relationships.

Marketing Professionals benefit most from AI SEO, AI Video Generation, and Prompt Engineering. Visibility in AI-powered search directly impacts customer acquisition as search behavior shifts toward conversational interfaces. Video generation removes production bottlenecks that limit content volume. Prompt engineering enables rapid content creation while maintaining brand consistency. These three skills combine to dramatically increase marketing leverage while reducing dependency on expanded teams or outsourced production.

Developers and Technical Teams should focus on Retrieval Augmented Generation, AI Agents, and LLM Evaluation. RAG systems transform unreliable AI experiments into production-ready information systems grounded in factual data. Agents enable autonomous task completion at scale, moving beyond assisted workflows to genuine automation. Evaluation frameworks ensure deployed systems maintain quality and cost-efficiency as usage scales. Technical professionals with these capabilities become AI infrastructure leaders rather than tool users.

Operations and Productivity Specialists gain maximum leverage from AI Workflow Automation, AI Tool Stacking, and Prompt Engineering. Operations roles involve coordinating information flow across systems, managing repetitive processes, and ensuring consistent execution—areas where AI automation delivers immediate, measurable returns. Tool stacking creates integrated systems from disconnected platforms. Prompt engineering enables effective AI delegation for knowledge work tasks. Mastering these skills enables operations professionals to reshape entire business processes rather than incrementally improving them.

Content Creators and Creative Professionals should develop Multimodal AI, AI Video Generation, and Fine-Tuning capabilities. Multimodal skills enable working across formats fluidly, compressing production timelines and expanding creative possibilities. Video generation democratizes motion content creation. Fine-tuning customizes AI systems to specific creative voices, styles, and domain expertise. These capabilities multiply creative output while maintaining quality and distinctiveness that generic AI tools cannot replicate.

Career stage also influences prioritization. Early-career professionals benefit most from accessible, high-impact skills like prompt engineering and workflow automation that deliver immediate productivity gains and demonstrate AI fluency to employers. Mid-career professionals should invest in specialized technical skills aligned with their domain—RAG for knowledge management, fine-tuning for domain expertise, evaluation frameworks for technical leadership. Senior professionals and executives need strategic capabilities like systems thinking and narrative control that inform organizational AI strategy rather than individual task execution.

How These AI Skills Improve Visibility in AI Search Engines

AI-powered search engines fundamentally change how organizations achieve discovery and prominence online. Traditional SEO optimizes for ranking positions in result lists. AI search requires becoming authoritative source material that AI systems cite when generating responses. The skills covered in this guide directly enable this transformation by improving the signals AI platforms use to evaluate expertise, trust, and relevance.

Entity signals form the foundation of AI understanding about organizations, products, and individuals. AI systems build knowledge graphs that map relationships between entities, establishing context for when and how to reference specific sources. Prompt engineering creates content that clearly establishes entity relationships. Structured data implementation through technical SEO makes these relationships machine-readable. AI narrative control deliberately manages knowledge graph presence, ensuring accurate representation across platforms. Together, these practices transform scattered mentions into coherent entity profiles AI systems recognize as authoritative.

Structured data enables AI platforms to extract factual information accurately rather than inferring meaning from unstructured text. Schema markup, which aligns with AI SEO best practices, provides explicit signals about content type, authorship, relationships, and factual claims. RAG systems demonstrate how structured information improves retrieval accuracy—the same principles apply to how AI search engines select and use source material. Organizations implementing comprehensive structured data gain representation advantages as AI systems increasingly prioritize information they can parse and verify.

Citation authority determines which sources AI systems trust when synthesizing answers. Traditional backlinks remain relevant but insufficient. AI platforms evaluate content depth, factual accuracy, consistent messaging, and domain expertise demonstrated across multiple sources. Creating citation-worthy content requires understanding what makes information authoritative to AI systems—comprehensive coverage, clear attribution, factual grounding, and technical accuracy. Skills like fine-tuning demonstrate domain expertise by creating AI systems that reflect specialized knowledge, indirectly signaling subject authority to AI search platforms.

Brand mentions across diverse, trusted sources create reinforcement effects where AI systems develop confident understanding about organizations and offerings. Workflow automation enables consistent content distribution across platforms. AI tool stacking creates integrated publishing systems that maintain brand presence. Narrative control ensures consistency across these mentions, preventing conflicting signals that confuse AI understanding. Volume matters less than coherence—scattered, contradictory mentions undermine AI confidence while consistent messaging across sources reinforces authority.

Knowledge graphs synthesize information from structured databases, content analysis, and entity relationships into the conceptual frameworks AI systems use for understanding. Contributing directly to knowledge bases like Wikidata, maintaining accurate Wikipedia presence, and ensuring schema markup correctly represents organizational information all influence knowledge graph representation. Systems thinking approaches content architecture holistically, designing information structures that AI platforms can parse effectively rather than creating isolated content pieces.

AI discoverability ultimately depends on becoming the obvious, authoritative source for specific topics, use cases, or industry domains. This requires sustained investment across multiple skills rather than isolated optimization tactics. Organizations optimizing for ChatGPT search results need comprehensive content demonstrating expertise, structured data making that expertise machine-readable, citation patterns establishing trust, and narrative control ensuring accurate representation. The skills covered throughout this guide collectively enable this transformation from scattered online presence to coherent AI-readable authority.

The competitive advantage comes from integration rather than individual techniques. AI systems synthesize understanding from multiple signals simultaneously. Organizations that implement structured data but create shallow content lack citation authority. Brands with excellent content but poor entity representation struggle with accurate AI characterization. Comprehensive AI visibility requires systematic capability development across technical, content, and strategic domains—treating AI discoverability as an architectural challenge rather than a marketing tactic.

Conclusion

The AI skills landscape evolves rapidly, but the underlying capabilities covered here stay relevant whichever tools dominate in future years. Prompt engineering principles apply regardless of model architecture. Systems thinking guides effective AI integration independent of platform choices. Narrative control matters as long as AI systems mediate information access. Strategic skill development focuses on transferable expertise rather than tool-specific knowledge that becomes obsolete with the next model release.

Career differentiation increasingly comes from building with AI rather than using it. The baseline expectation shifts from “can operate ChatGPT” to “can architect AI systems that solve business problems reliably at scale.” This transition requires investments in technical capabilities, strategic thinking, and systematic implementation practices. The professionals developing these skills now create advantages that compound as AI capabilities expand, while those remaining at the usage level face growing commoditization as AI literacy becomes universal.

The path forward involves structured learning rather than trend chasing. Select skills aligned with career objectives. Build foundational understanding before attempting advanced applications. Develop systematic approaches through practice rather than accumulating surface knowledge through consumption. Treat AI capability as an ongoing professional development area rather than a checkbox skill to acquire. The organizations and individuals investing deliberately in deep AI capabilities position themselves for sustained relevance as artificial intelligence reshapes professional work across every industry.

Frequently Asked Questions

What is the easiest AI skill to learn for beginners?

Prompt engineering is the most accessible entry point into practical AI skills. It requires only access to a tool like ChatGPT or Claude and an understanding of clear communication principles. Beginners can achieve useful results within days by learning to structure instructions with context, constraints, and examples. Improvement comes through practice rather than theoretical knowledge, making it ideal for self-directed learning. The skill scales from basic usage to professional-grade implementation, providing a natural progression path as competence develops.
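One way to picture "context, constraints, and examples" is as a small template. The sketch below is a minimal illustration, not a prescribed format: the `build_prompt` helper, its section labels, and the sample task are all assumptions made for this example.

```python
def build_prompt(task, context, constraints, examples):
    """Assemble a prompt from context, a task, constraints, and input/output examples."""
    lines = [f"Context: {context}", f"Task: {task}", "Constraints:"]
    lines += [f"- {c}" for c in constraints]
    lines.append("Examples:")
    for example_input, example_output in examples:
        lines.append(f"Input: {example_input}")
        lines.append(f"Output: {example_output}")
    return "\n".join(lines)


# Hypothetical task, used only to show the structure.
prompt = build_prompt(
    task="Write a subject line for the email below.",
    context="You write for a small accounting firm's client newsletter.",
    constraints=["Keep it under 10 words", "Avoid exclamation marks"],
    examples=[("Tax deadline reminder", "Your filing deadline is two weeks away")],
)
print(prompt)
```

Keeping the pieces separate makes it easy to change one element at a time, for instance tightening a constraint, and compare the results.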

How long does it take to become proficient in AI workflow automation?

Achieving practical proficiency in AI workflow automation typically requires several weeks of consistent practice. The initial learning curve involves understanding workflow logic, trigger-action relationships, and platform-specific interfaces. Basic automations connecting two systems can be implemented within hours of starting. More sophisticated workflows incorporating conditional logic, data transformation, and error handling require weeks to master. Professional-grade implementations managing complex business processes may take several months to develop, particularly when designing systems that handle edge cases reliably and scale across organizational usage.
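The building blocks named above (a trigger, data transformation, conditional logic, and error handling) can be sketched in a few lines. This is an illustrative toy rather than how any particular automation platform works; the event fields, the 5000 budget threshold, and the queue names are assumptions invented for the example.

```python
def transform(event):
    """Data transformation: normalize the raw fields from the trigger event."""
    return {
        "email": event["email"].strip().lower(),
        "budget": int(event.get("budget", 0)),
    }


def route(lead):
    """Conditional logic: pick a destination queue (threshold is hypothetical)."""
    return "sales" if lead["budget"] >= 5000 else "nurture"


def handle_new_lead(event):
    """Action run when a 'new lead' trigger fires."""
    try:
        lead = transform(event)
    except (KeyError, TypeError, ValueError) as exc:
        # Error handling: report malformed events instead of crashing the workflow.
        return {"status": "error", "reason": repr(exc)}
    return {"status": "ok", "queue": route(lead), "lead": lead}


print(handle_new_lead({"email": " Ana@Example.com ", "budget": "8000"}))
print(handle_new_lead({"budget": "100"}))  # missing email, handled as an error
```

Most of the effort in professional-grade workflows goes into the error branch: deciding what happens to events that do not match the expected shape.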

Do I need coding skills to build AI agents?

Building functional AI agents no longer requires traditional programming expertise, though technical thinking helps significantly. No-code platforms abstract away infrastructure complexity, enabling agent development through visual interfaces and configuration rather than code. Understanding logic, data flow, and system architecture matters more than syntax knowledge. However, developers with coding skills unlock more sophisticated capabilities: custom tools, advanced orchestration, and precise control over agent behavior. The choice between no-code and coded approaches depends on specific requirements rather than absolute capability limitations.

What’s the difference between RAG and fine-tuning?

Retrieval Augmented Generation and fine-tuning solve different problems with distinct approaches. RAG systems connect models to external knowledge bases, retrieving relevant information dynamically during response generation. This approach keeps information current, enables source attribution, and works without model retraining. Fine-tuning modifies model weights directly through continued training on domain-specific data, embedding knowledge and behavior patterns into the model itself. RAG excels when information changes frequently or source attribution matters. Fine-tuning suits cases requiring consistent specialized behavior, particular tone, or deep domain expertise that prompts cannot effectively convey.
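The retrieval half of RAG can be illustrated with a toy example. The sketch below ranks documents by simple word overlap, a deliberate simplification: production systems usually rely on embedding-based similarity search. The function names, the sample documents, and the prompt wording are all assumptions made for illustration, and no model is called.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, k=1):
    """Return the k documents sharing the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_grounded_prompt(query, documents, k=2):
    """Place retrieved passages in the prompt, numbered so the answer can cite them."""
    sources = retrieve(query, documents, k)
    context = "\n".join(f"[{i + 1}] {doc}" for i, doc in enumerate(sources))
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"{context}\nQuestion: {query}"
    )


# Hypothetical knowledge base entries.
docs = [
    "Refunds are accepted within 30 days of purchase.",
    "Our office is closed on public holidays.",
    "Shipping takes five business days.",
]
print(build_grounded_prompt("How many days do refunds take?", docs))
```

Updating the answer only requires editing `docs`; the model itself is untouched. Fine-tuning works the other way around, changing the model's weights so the behavior persists without any retrieved context.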

Which AI skill has the highest career ROI right now?

AI Systems Thinking currently delivers the highest career return on investment for mid-to-senior professionals. While prompt engineering and workflow automation provide quick wins, systems thinking enables designing AI architectures that create lasting competitive advantages. Organizations struggle to find professionals who can architect reliable AI implementations rather than demonstrate impressive prototypes. This skill translates across industries, remains relevant despite tool changes, and positions professionals for leadership roles shaping organizational AI strategy. The capability scarcity combined with high organizational demand creates premium compensation opportunities for those developing systems-level AI expertise.

How do AI SEO and traditional SEO differ?

Traditional SEO optimizes for ranking positions in search result lists by targeting keywords, building backlinks, and improving technical site performance. AI SEO optimizes for citation and accurate representation in AI-generated responses by focusing on entity signals, structured data, comprehensive content, and citation authority. Rankings matter less than becoming trusted source material. Keyword targeting gives way to topic authority and entity clarity. AI search engine optimization strategies emphasize machine-readable information structure and factual accuracy over engagement metrics. Both disciplines share technical foundations but optimize for fundamentally different success criteria—visibility through ranking versus authority through citation.

What are the best resources for learning prompt engineering?

Learning prompt engineering as a beginner starts with hands-on practice rather than theoretical study. OpenAI’s documentation provides foundational concepts and best practices directly from the model’s creators. Anthropic’s prompt engineering guide covers advanced techniques for Claude specifically. Scale AI’s prompt engineering guide offers comprehensive frameworks for systematic prompt development. Beyond formal documentation, building a personal library of successful prompts and studying high-quality examples reveals patterns more effectively than courses. Practice with real tasks, document what works, analyze failures systematically, and build an intuition for how models interpret different instruction types.

How can small businesses benefit from AI workflow automation tools?

AI workflow automation tools for small businesses eliminate manual work that doesn’t scale with team size. A three-person operation can achieve operational efficiency comparable to organizations ten times larger by automating repetitive processes. Customer onboarding, lead qualification, invoice processing, inventory management, and communication workflows run automatically rather than consuming staff time. The cost barrier has dropped significantly—most automation platforms charge based on usage rather than requiring enterprise contracts. Small businesses gain disproportionate advantages because automation removes growth bottlenecks that would otherwise require hiring, enabling revenue scaling without proportional cost increases.